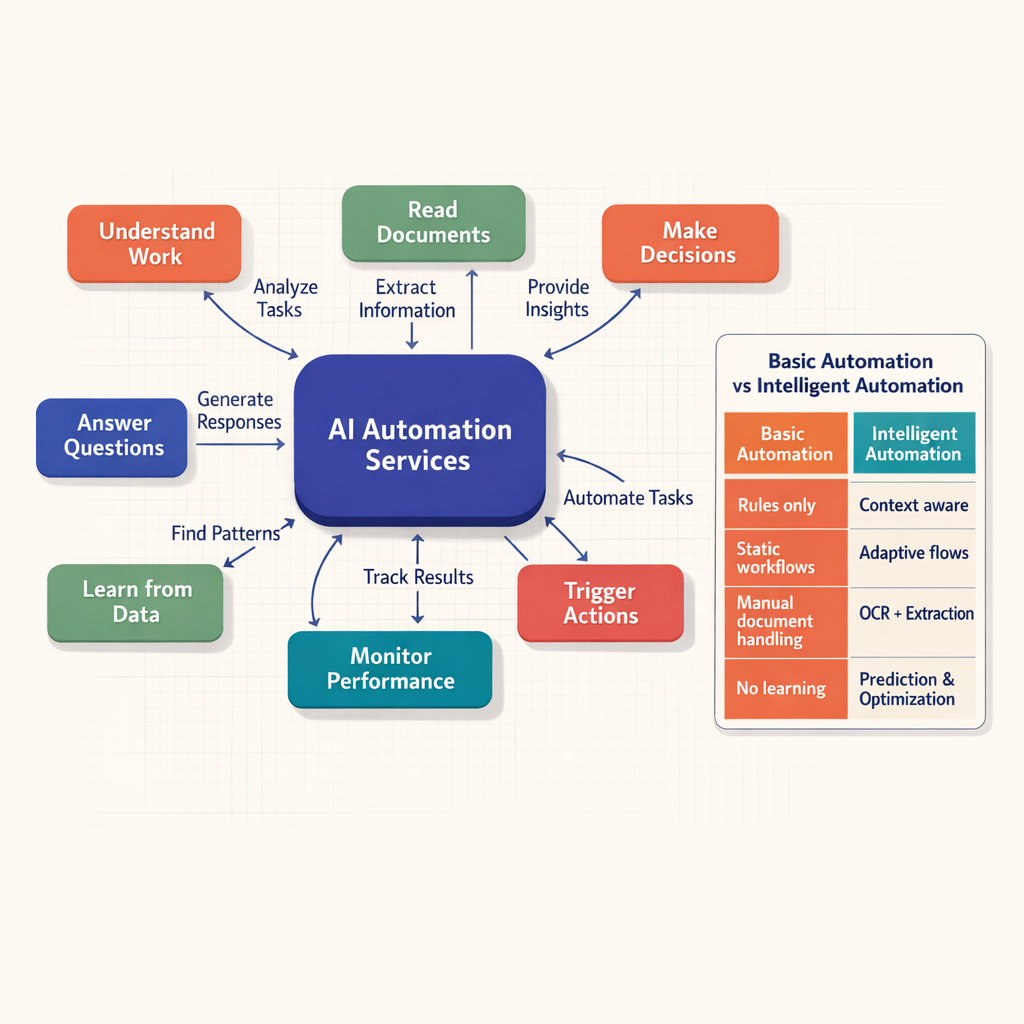

AI Automation Services: Transforming Workflows for 2023

Explore how AI automation services enhance efficiency and accuracy across business processes.

AI automation services are professional services that design, build, integrate, and run AI-powered workflows that replace or support manual work. They combine strategy, models, APIs, data pipelines, and execution layers into usable AI automation solutions that drive measurable business outcomes.

Businesses buy these services to reduce repetitive effort, improve speed, increase accuracy, and make systems work together. The scope usually spans discovery, implementation, governance, monitoring, and optimization -- not just a chatbot or a standalone model.

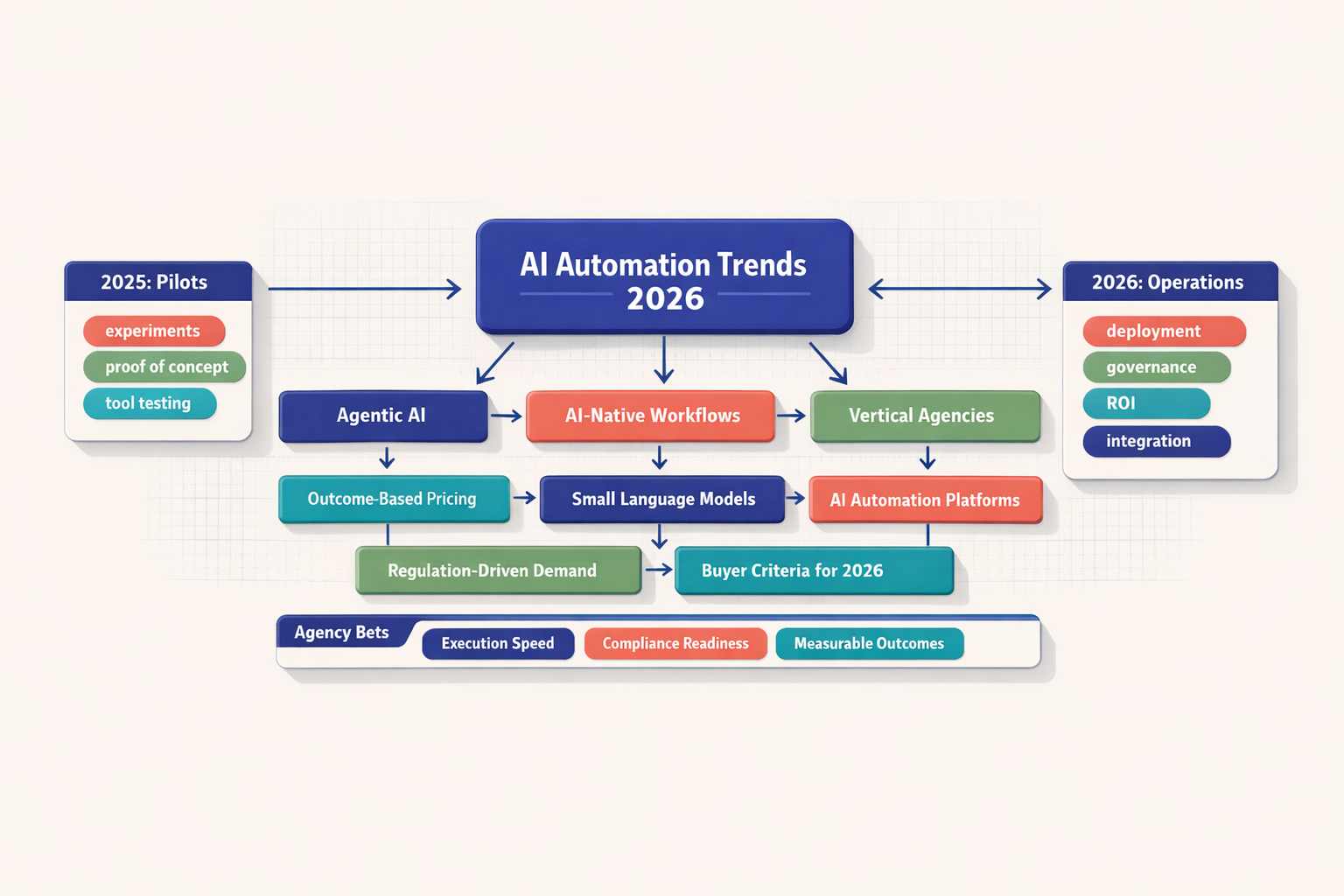

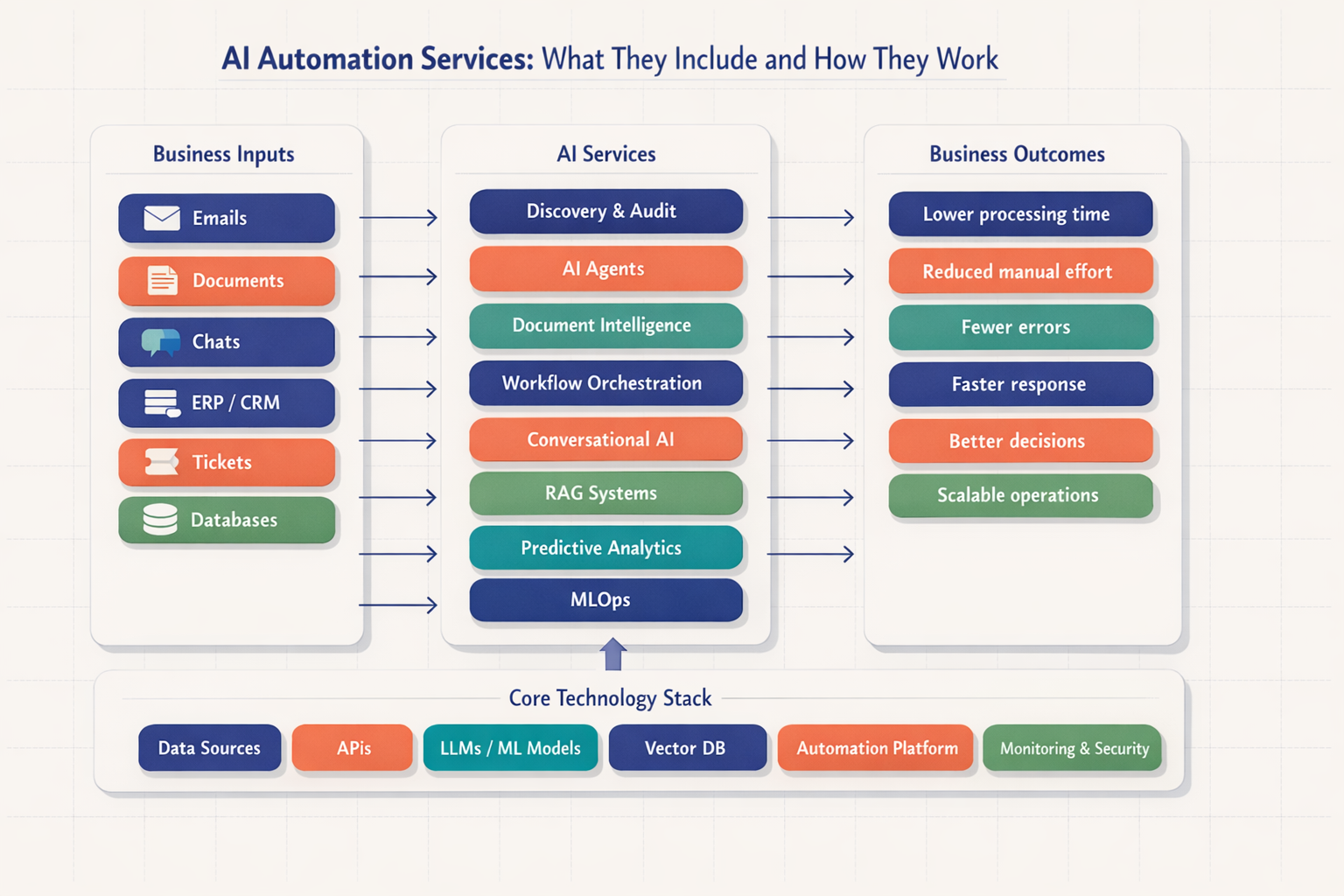

Architecture diagram showing emails, documents, chats, ERP systems, and databases feeding into discovery, AI agents, document intelligence, workflow orchestration, RAG, analytics, and MLOps, with business outcomes such as lower processing time and reduced manual effort

For modern businesses, AI automation services mean more than automating clicks or adding AI to a single screen. They are structured delivery services that turn fragmented work into connected, production-grade systems. That includes consulting, architecture, implementation, integration, testing, monitoring, and optimization. A buyer is not purchasing a model alone. A buyer is purchasing an operating capability.

At Imversion Technologies Pvt Ltd, a pattern we see often is that teams first ask for "AI" but actually need process redesign, data cleanup, system integration, and clear controls before any model creates value. That distinction matters. Automation reduces human error, but only if the workflow around the model is designed properly. In our work, the first workshops often reveal something simple but expensive: manual handoffs and inconsistent source fields are doing more damage than the lack of a model.

Basic automation follows fixed rules. If an invoice arrives, route it. If a field matches, update the record. If a ticket has a tag, assign it to a queue. Useful -- but limited.

AI-driven automation handles variability. It can classify unstructured emails, extract fields from mixed-format documents, summarize cases, recommend next actions, or search company knowledge before responding. What changes the outcome is the service layer around it: prompt design, retrieval logic, API integrations, guardrails, monitoring, and fallback paths.

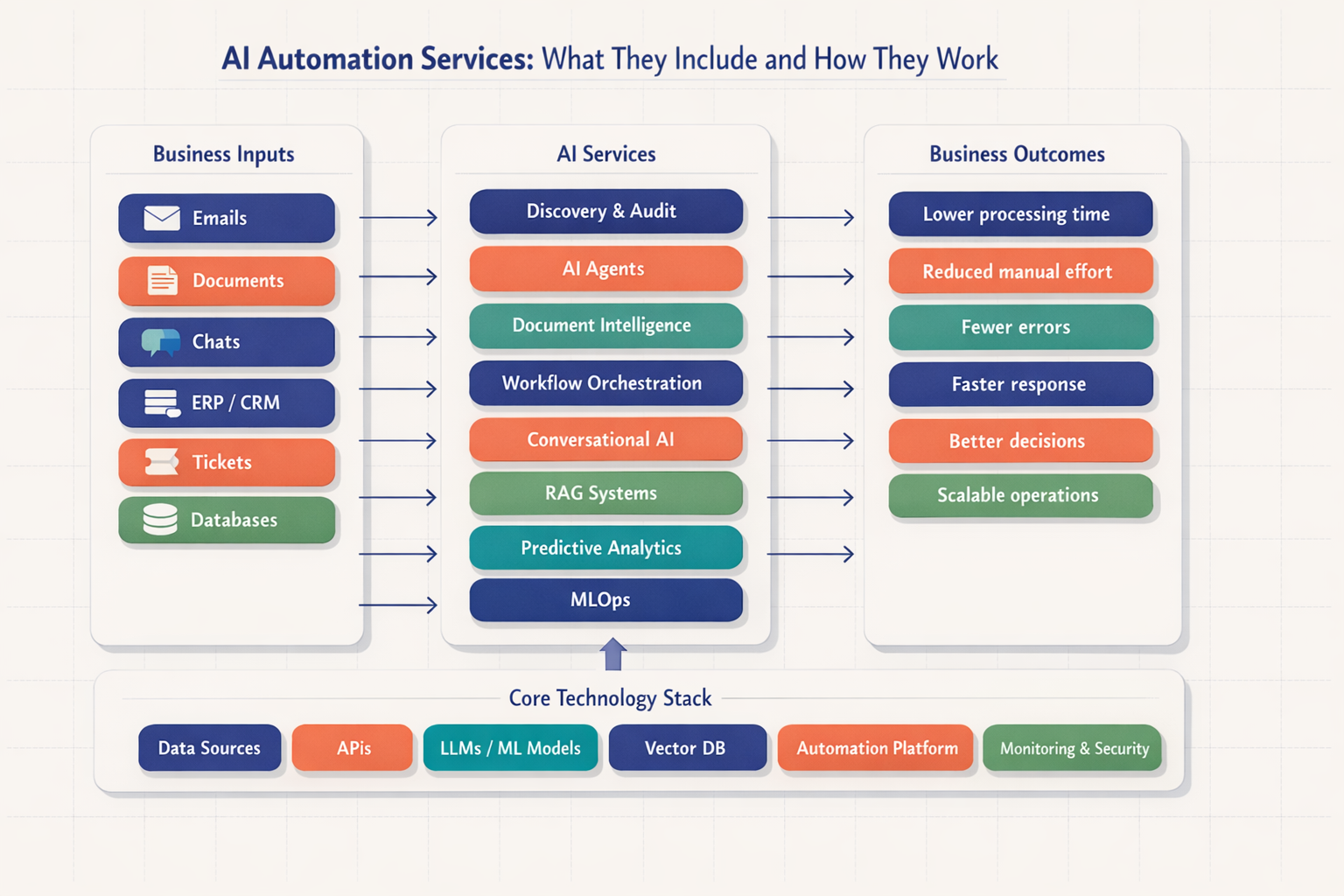

Concept map centered on AI Automation Services with branches for reading documents, making decisions, triggering actions, answering questions, and learning from data, plus a side-by-side comparison of AI automation versus basic rule-based automation

Business process automation with AI sits between classic workflow automation and standalone AI tools. It uses AI where judgment, language, prediction, or document interpretation is needed, then combines that with workflow engines, RPA, or APIs to complete the process end to end.

A practical definition helps: AI automation company services usually include five things:

Naresh HR approaches this from an engineering perspective shaped by backend architecture, DevOps, and reliability concerns at Imversion Technologies Pvt Ltd. Security should not be optional. Monitoring matters just as much as deployment.

Discovery is where serious AI automation services begin. Teams map current processes, review systems, inspect data quality, identify bottlenecks, and prioritize use cases by value, complexity, and risk. This phase often includes stakeholder workshops, process walkthroughs, system diagrams, and baseline KPI capture.

A practical example is invoice processing. The audit checks document formats, exception rates, approval routing, ERP integration points, and manual touchpoints. The result is not a vague idea. It is a delivery plan with scope, dependencies, and expected outcomes.

AI agents are task-performing software workers that can interpret context, decide the next action, call tools, and update systems across multiple steps. In AI process automation services, they are useful for triaging emails, updating CRM records, routing requests, and coordinating handoffs between departments.

A common business example is support ticket triage. An agent reads a ticket, identifies intent and urgency, checks account context through APIs, drafts a response, updates the helpdesk, and escalates when confidence is low. Good buying criteria include tool-use reliability, guardrails, auditability, and error handling.
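The "escalate when confidence is low" step is the part that is easiest to get wrong, so a small sketch may help. This is a minimal illustration, not a production agent: `classify` is a hypothetical stand-in for a model call (keyword rules keep it runnable), and the queue names and the `0.75` threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Triage:
    intent: str
    urgency: str
    confidence: float


def classify(ticket_text: str) -> Triage:
    """Hypothetical stand-in for a model call; keyword rules keep it runnable."""
    text = ticket_text.lower()
    if "refund" in text:
        return Triage("billing", "high", 0.92)
    if "password" in text:
        return Triage("account_access", "medium", 0.88)
    return Triage("unknown", "low", 0.30)


def route(ticket_text: str, threshold: float = 0.75) -> dict:
    """Send confident results to the intent queue, everything else to a human."""
    result = classify(ticket_text)
    if result.confidence < threshold:
        return {"queue": "human_review", "auto_reply": False, "intent": result.intent}
    return {"queue": result.intent, "auto_reply": True, "intent": result.intent}
```

Calling `route("I need a refund for my last order")` sends the ticket to the billing queue, while an unrecognized message falls through to `human_review`. The point of the design is that the fallback path is explicit rather than implied.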

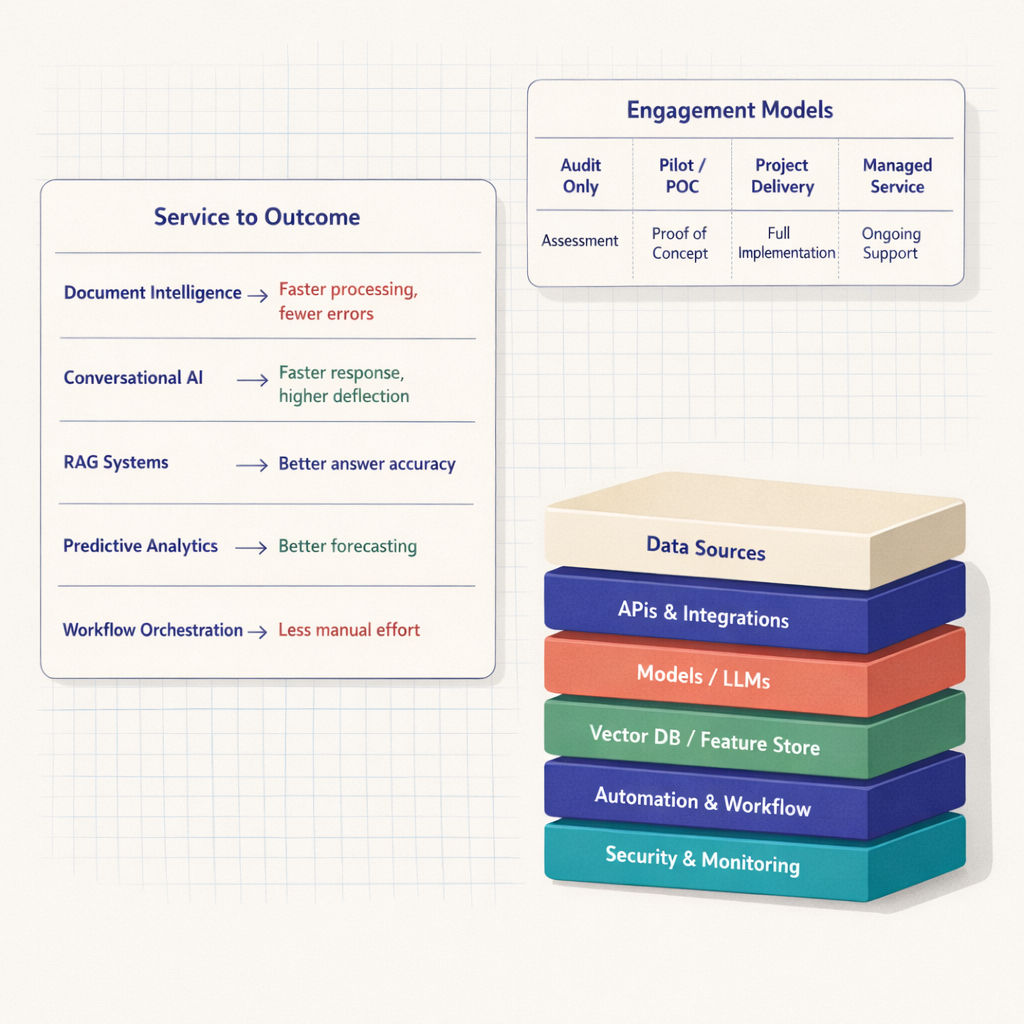

Document intelligence focuses on extracting, classifying, validating, and structuring information from documents such as invoices, contracts, KYC forms, claims, and purchase orders. The stack often includes OCR and document AI tools like AWS Textract, Azure AI Document Intelligence, Google Document AI, and custom validation logic.

This service fits early in many programs because the ROI is easy to understand. Manual entry drops. Processing speed improves. Consistency improves too. A claims-processing example is straightforward: extract claimant details, classify the form, validate against policy data, and send exceptions to a reviewer.
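The validate-then-route step in the claims example can be sketched as follows. It assumes extraction has already produced a dictionary of fields; the field names (`claimant_name`, `policy_id`, `amount`), the `coverage_limit` key, and the issue labels are hypothetical and only serve the illustration.

```python
REQUIRED_FIELDS = ("claimant_name", "policy_id", "amount")


def validate_claim(extracted: dict, policies: dict) -> list[str]:
    """Return a list of issues; an empty list means the claim can proceed."""
    issues = [f"missing:{name}" for name in REQUIRED_FIELDS if not extracted.get(name)]
    policy = policies.get(extracted.get("policy_id"))
    if policy is None:
        issues.append("unknown_policy")
    elif (extracted.get("amount") or 0) > policy["coverage_limit"]:
        issues.append("amount_exceeds_limit")
    return issues


def next_step(extracted: dict, policies: dict) -> str:
    """Route clean claims forward and anything with issues to a reviewer."""
    return "reviewer_queue" if validate_claim(extracted, policies) else "auto_process"
```

A claim that passes every check goes to `auto_process`. Anything with a missing field, an unknown policy, or an amount above the limit lands in `reviewer_queue` with the reasons attached.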

Workflow orchestration connects AI outputs to business execution. This is where intelligent automation services become operational. Models classify or generate information, but orchestration decides what happens next -- create a task, call an API, update ERP, notify Slack, open a case, or trigger human review.

Tools vary by environment: UiPath for RPA-heavy estates, n8n and Zapier for lightweight integrations, Camunda or Temporal for process orchestration, and Airflow for data-centric scheduling. A common example is lead processing: enrich data, score quality, route qualified leads to CRM, and alert sales automatically.
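Independent of the tool chosen, the lead-processing flow reduces to a few small steps chained together. The sketch below is plain Python rather than any specific orchestrator's API; the scoring weights, the `qualify_at` cutoff, and the destination names are assumptions made up for the example.

```python
FREE_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com"}


def enrich(lead: dict) -> dict:
    """Add derived fields; a real step might call an enrichment API instead."""
    domain = lead["email"].split("@")[-1].lower()
    return {**lead, "domain": domain, "business_email": domain not in FREE_DOMAINS}


def score(lead: dict) -> int:
    """Toy scoring rule: business email and company size raise the score."""
    points = 70 if lead["business_email"] else 20
    if lead.get("company_size", 0) >= 50:
        points += 20
    return points


def process_lead(lead: dict, qualify_at: int = 60) -> dict:
    """Enrich, score, then decide where the lead goes and who is alerted."""
    enriched = enrich(lead)
    points = score(enriched)
    qualified = points >= qualify_at
    return {
        "lead": enriched,
        "score": points,
        "destination": "crm" if qualified else "nurture",
        "alerts": ["sales"] if qualified else [],
    }
```

In a real deployment each function would likely become a separate task or activity so the orchestrator can retry and audit them independently.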

Conversational AI covers chatbots, voice bots, virtual assistants, and internal copilots. The service includes intent design, prompt engineering, integration with CRMs or helpdesks, escalation flows, analytics, and guardrails for tone, privacy, and compliance.

The practical example is an internal HR or IT helpdesk assistant. It answers policy questions, gathers request details, creates tickets, and hands over complex cases to humans. At Imversion Technologies Pvt Ltd, one repeated observation is that organizations get better results when conversational systems are tied to workflows instead of being left as isolated chat interfaces.

RAG systems use retrieval-augmented generation to ground AI responses in approved company knowledge. They retrieve relevant content from documents or databases before generating an answer, which improves factuality and control.

This service is ideal for internal knowledge assistants, policy lookup, customer support knowledge bases, and technical documentation search. The architecture usually includes chunking, embeddings, vector databases such as Pinecone, Weaviate, Qdrant, or pgvector, plus reranking and citation logic. A practical use case is a support assistant that answers from product manuals and SOPs instead of relying on model memory.
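To make the retrieve-then-generate idea concrete, here is a deliberately tiny sketch. It swaps real embeddings and a vector database for a bag-of-words vector and cosine similarity so that it runs with the standard library alone; the chunk structure (`source`, `text`) and the prompt wording are assumptions, and no model is actually called.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[dict], k: int = 2) -> list[dict]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)[:k]


def build_prompt(query: str, chunks: list[dict], k: int = 2) -> str:
    """Ground the question in retrieved text and keep the source for citation."""
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in retrieve(query, chunks, k))
    return f"Answer using only the context below and cite sources.\n{context}\nQuestion: {query}"
```

The prompt carries the source label next to each chunk, which is what lets the final answer cite a manual or SOP instead of relying on model memory.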

Predictive analytics services use machine learning to forecast outcomes such as churn, fraud risk, demand, maintenance needs, delinquency, or ticket volume. This category differs from generative automation. It is less about content generation and more about scoring and forecasting.

It fits wherever acting early is cheaper than reacting late. Take a sales or retention example: a model scores churn risk, the workflow assigns a save campaign, and dashboards track conversion and false-positive rates. Good buying criteria include data readiness, explainability, retraining strategy, and KPI alignment.
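Tracking false positives for a churn model usually comes down to precision and recall at a chosen score threshold. The sketch below shows that arithmetic on made-up scores and labels; the `0.5` threshold and the function name are assumptions for illustration.

```python
def precision_recall(scores: list[float], churned: list[bool], threshold: float = 0.5) -> dict:
    """Compare flagged customers (score >= threshold) against who actually churned."""
    flagged = [s >= threshold for s in scores]
    tp = sum(f and c for f, c in zip(flagged, churned))          # flagged and churned
    fp = sum(f and not c for f, c in zip(flagged, churned))      # flagged but stayed
    fn = sum((not f) and c for f, c in zip(flagged, churned))    # missed churners
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positives": fp,
    }
```

Low precision means save campaigns are being spent on customers who would have stayed anyway. Low recall means real churners are slipping past the workflow.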

MLOps keeps models and AI workflows working after launch. This includes deployment pipelines, versioning, model registry, prompt and configuration management, evaluation, drift detection, rollback strategies, observability, and governance. Without this layer, many AI automation solutions stall after the pilot.

The practical example is a document pipeline whose extraction accuracy degrades as document formats change. MLOps catches the drift, flags confidence drops, routes more items to review, and triggers retraining or prompt updates. At Imversion Technologies Pvt Ltd, a common operational pattern is that teams underestimate prompt versioning, secret rotation, and environment parity until the first production release forces discipline. Consistency across environments is critical here. Docker, Kubernetes, CI/CD pipelines, feature flags, secret management, and production telemetry are not optional extras. They are operating basics.
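One simple way to catch the confidence drop described above is to compare recent extraction confidence against a baseline window and tighten the review threshold when it slips. This is a minimal sketch under that assumption; the `max_drop` tolerance and the two threshold values are illustrative, and real drift detection usually looks at more than a mean.

```python
from statistics import mean


def confidence_drift(baseline: list[float], recent: list[float], max_drop: float = 0.10) -> dict:
    """Flag drift when mean confidence falls by more than max_drop."""
    drop = mean(baseline) - mean(recent)
    return {"drop": round(drop, 3), "drifted": drop > max_drop}


def review_threshold(drifted: bool, normal: float = 0.80, strict: float = 0.90) -> float:
    """Route more items to human review while drift is being investigated."""
    return strict if drifted else normal
```

Raising the threshold during drift costs reviewer time, but it keeps bad extractions out of downstream systems until retraining or prompt updates land.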

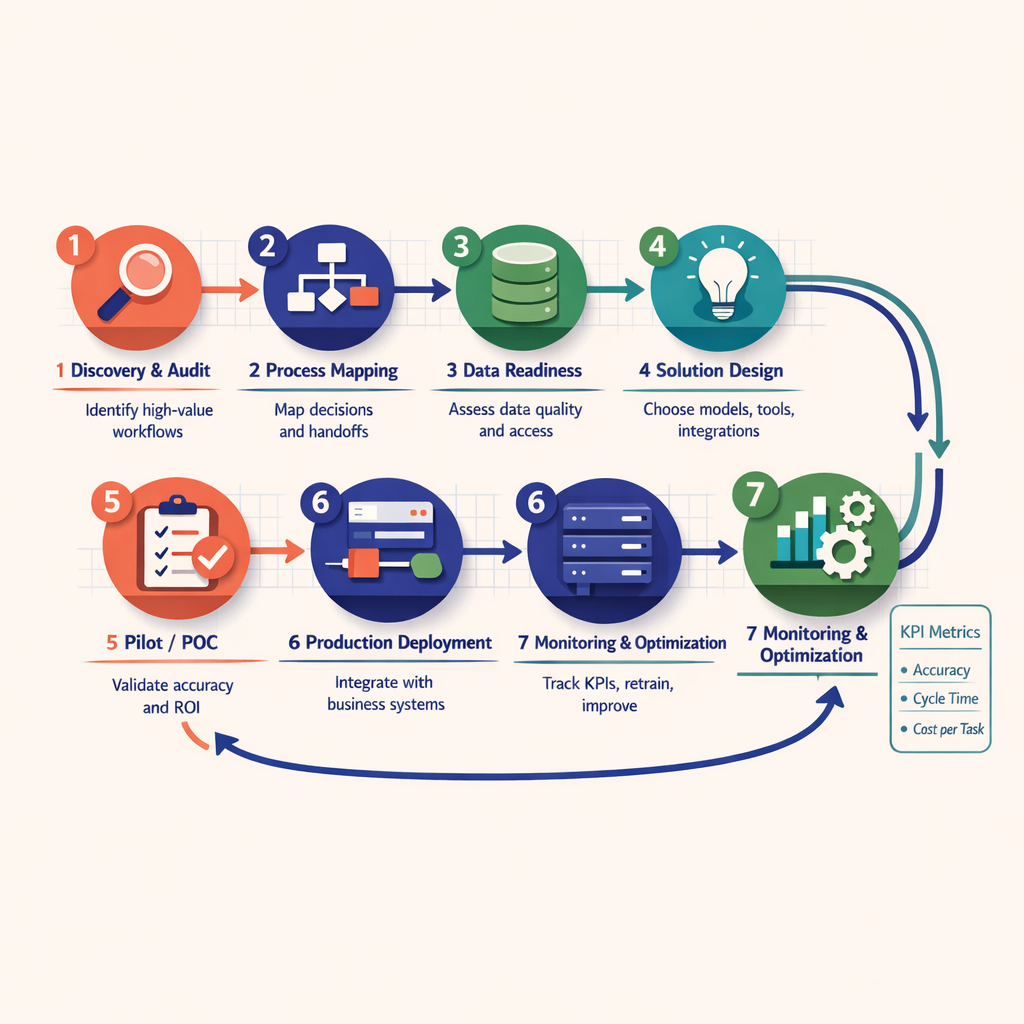

The delivery lifecycle starts by identifying the process, pain point, users, systems, and baseline metrics. Teams document current-state flow, exception paths, approval logic, and compliance requirements. Then they inspect the data -- structure, completeness, labeling quality, document variation, and access constraints.

This phase decides feasibility. Bad data, unclear ownership, or fragmented systems can kill momentum early. At Imversion Technologies Pvt Ltd, teams often find that the fastest path is not automating the whole process first. It is automating the highest-friction segment with clean handoffs. I have seen teams insist on a full end-to-end rollout, then reverse course once process mapping exposed three separate approval branches and two unofficial spreadsheets controlling the real workflow.

After discovery, architects define the target flow. Which steps need rules? Which need AI? Which need a human? Then comes model selection: OpenAI or Anthropic for language tasks, a specialized OCR engine for document extraction, a classical ML model for scoring, or a hybrid design that mixes all three.

The design should include:

Because production systems fail at the edges, not on demo screens.

Implementation connects the solution to real systems through APIs, message queues, databases, webhooks, or RPA where APIs do not exist. ERP, CRM, helpdesk, DMS, and email systems often need to be part of the flow. This is where many AI process automation services either become useful or turn brittle.

Testing should cover far more than accuracy. It must include latency, timeout handling, malformed inputs, permission errors, retry logic, cost boundaries, and fallback behavior. Human-in-the-loop controls are critical for high-risk tasks such as claims, financial approvals, or regulated communication.
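Retry logic and fallback behavior are easier to test when they live in one small wrapper. The sketch below retries a task on timeout or connection errors with exponential backoff, then hands the item to human review instead of failing silently. The function name, the status strings, and the choice of exceptions to retry are assumptions for the example.

```python
import time
from typing import Callable


def call_with_fallback(
    task: Callable[[], object],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Retry transient failures, then fall back to human review."""
    last_error = None
    for attempt in range(attempts):
        try:
            return {"status": "ok", "result": task(), "attempts": attempt + 1}
        except (TimeoutError, ConnectionError) as exc:
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * (2 ** attempt))  # wait 0.5s, 1s, 2s, ...
    return {"status": "needs_human_review", "error": repr(last_error), "attempts": attempts}
```

Passing `sleep` as a parameter lets a test suite replace it with a no-op, so the timeout and fallback paths can be exercised without real delays.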

Deployment is iterative. Start with a narrow workflow, limited audience, and strong monitoring. Then expand. Monitoring matters as much as deployment -- Naresh HR takes a hard position on that because silent failures create hidden operational damage. In backend and DevOps work, I keep coming back to the same lesson: a pipeline that deploys cleanly but hides queue buildup, API retries, or extraction confidence drops is not production-ready. Teams should track task success rate, confidence distribution, exception volume, SLA adherence, cost per task, and user override rates. If performance drifts, the system needs tuning, not denial.
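Several of the metrics listed above can be computed from a plain log of task runs. The sketch below assumes each run is a dictionary with hypothetical `status`, `overridden`, and `cost_usd` fields; a real pipeline would pull these from its telemetry store.

```python
def summarize_runs(runs: list[dict]) -> dict:
    """Aggregate task-level records into the headline operational metrics."""
    total = len(runs)
    if total == 0:
        return {"tasks": 0}
    return {
        "tasks": total,
        "success_rate": sum(r["status"] == "success" for r in runs) / total,
        "exception_volume": sum(r["status"] == "exception" for r in runs),
        "override_rate": sum(bool(r["overridden"]) for r in runs) / total,
        "cost_per_task": sum(r["cost_usd"] for r in runs) / total,
    }
```

A rising override rate alongside a stable success rate is a useful early signal: the system reports success, but users no longer trust its output.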

Seven-step implementation flowchart showing Discovery and Audit, Process Mapping, Data Readiness, Solution Design, Pilot, Production Deployment, and Monitoring and Optimization, with directional arrows, KPI callouts, and a feedback loop from production back to optimization

The smartest buying decision starts with an outcome, not a tool. A company trying to cut manual entry should not begin with predictive analytics. A business trying to improve forecast accuracy should not start with a generic chatbot. Business process automation with AI works best when the service category matches the operating bottleneck.

| Service type | Ideal use cases | Expected outcomes | Common KPIs | Complexity |
|---|---|---|---|---|
| Document intelligence | Invoices, claims, forms, contracts | Lower processing time, fewer entry errors | Extraction accuracy, turnaround time, cost per document | Medium |
| AI agents | Ticket triage, CRM updates, request coordination | Less manual effort, faster routing | Auto-resolution rate, handling time, exception rate | Medium-High |
| Conversational AI + RAG | Customer support, internal knowledge assistants | Faster responses, better self-service | First response time, containment rate, CSAT | Medium |
| Predictive analytics | Churn, fraud, demand, maintenance | Better forecasting and prioritization | Precision, recall, forecast error, conversion lift | High |
| Workflow orchestration | Cross-system process execution | Stronger SLA performance and consistency | Cycle time, failed runs, handoff delays | Medium |
| MLOps | Ongoing model operations | Reliability, governance, stable scale | Drift rate, deployment frequency, rollback time | High |

For buyers evaluating AI automation company services, the practical sequence is simple:

That is how AI automation solutions move from pilot excitement to operational value.

Providers package AI automation company services in several ways. Advisory and audit-only engagements suit teams that need a roadmap, architecture review, process assessment, or vendor-neutral recommendations before spending on implementation. This model works well when internal engineering is strong.

Pilot or proof-of-concept engagements validate feasibility fast. They are useful when data quality is uncertain, stakeholders need confidence, or the use case involves new tooling such as LLM agents or RAG.

Project-based implementation is the standard delivery model for scoped workflows like invoice extraction, support triage, or CRM enrichment. It fits organizations with a defined process, budget, and deadline.

Dedicated team models make sense when the roadmap is broad and cross-functional. The provider supplies engineers, AI specialists, DevOps support, and product coordination over multiple releases.

Managed service or long-term support is best when optimization matters as much as launch. Intelligent automation services need monitoring, retraining, version control, cost management, and governance over time. At Imversion Technologies Pvt Ltd, a recurring pattern is that mature teams shift toward managed support once their first production automations start touching business-critical operations. The reason is simple -- once alerts, model updates, vendor API changes, and compliance checks become routine, ad hoc ownership stops working.

Comparison graphic matching document intelligence, conversational AI, RAG, predictive analytics, and workflow orchestration to business outcomes, alongside engagement models ranging from audit-only and pilot to project delivery, dedicated team, and managed service

Production-grade AI automation services depend on a layered stack. Data comes from ERPs, CRMs, helpdesks, emails, file stores, and internal databases. APIs connect systems. LLMs from OpenAI or Anthropic handle language tasks. OCR and document AI tools such as AWS Textract or Azure AI Document Intelligence process files. Vector databases support RAG. Workflow layers like UiPath, n8n, Airflow, LangChain, or custom services coordinate execution.

Then comes the part that determines survival in production. Observability. Security. Deployment discipline. Logs, traces, dashboards, secret management, access control, containerization with Docker, orchestration with Kubernetes, cloud infrastructure on AWS or Azure, and CI/CD pipelines all matter.

Imversion Technologies Pvt Ltd treats stack choice as an engineering decision, not a trend decision. The wrong stack raises cost, weakens governance, and breaks under scale. The right one makes AI automation solutions reliable, secure, and maintainable.

You should prepare process documentation, sample inputs and outputs, access to the systems involved, baseline metrics, and a clear owner for the workflow. AI projects move faster when the business already knows where delays, exceptions, and handoffs happen, because the provider can design around real operational constraints instead of assumptions.

Most AI automation services show early results in 4 to 12 weeks when the scope is narrow and the systems are accessible. Document workflows, ticket triage, and internal knowledge assistants often produce faster wins than large cross-functional transformations that require heavy integration, change management, and governance review.

Companies should measure business impact, not just model accuracy. The strongest indicators are cycle time reduction, lower manual workload, fewer exceptions, improved SLA performance, reduced cost per transaction, and better user adoption. A technically accurate system is not successful if employees override it constantly or if downstream bottlenecks remain unchanged.

The main risks are poor data quality, weak integration design, unclear escalation rules, hidden compliance issues, and lack of ongoing monitoring. Many failures come from treating automation as a one-time deployment instead of an operating capability that needs maintenance, version control, and accountability after launch.

A managed service is better when the workflow is business-critical, model behavior may drift, vendors change APIs frequently, or internal teams do not have time to monitor performance continuously. It is especially useful once automation affects customer communications, financial processing, compliance-sensitive tasks, or multiple departments at once.